Your AI Support Agent Closed the Ticket. The Customer Left Anyway.

Everyone's measuring deflection. Nobody's measuring defection.

"The purpose of a business is to create and keep a customer."

— Peter Drucker

Zendesk’s 2024 CX Trends Report reveals a contradiction that should worry every executive: 66% of customers expect AI to make interactions faster, yet 48% say AI actually makes experiences more frustrating. The technology is delivering on speed while missing on outcome.

Nobody asks the follow-up question that actually matters: How many of those customers bought again?

Because here is what the data actually says, and I have pulled this from across industry research over the past year: a 68% automation rate looks great until you learn that 60% of those “resolved” tickets reopen within 48 hours. Customer Contact Week’s 2024 research on contact center AI found that this reopen rate is consistent across enterprise implementations. The customer did not get an answer. They got tired of trying.

And when customers get tired, they leave.

The Metrics Mismatch

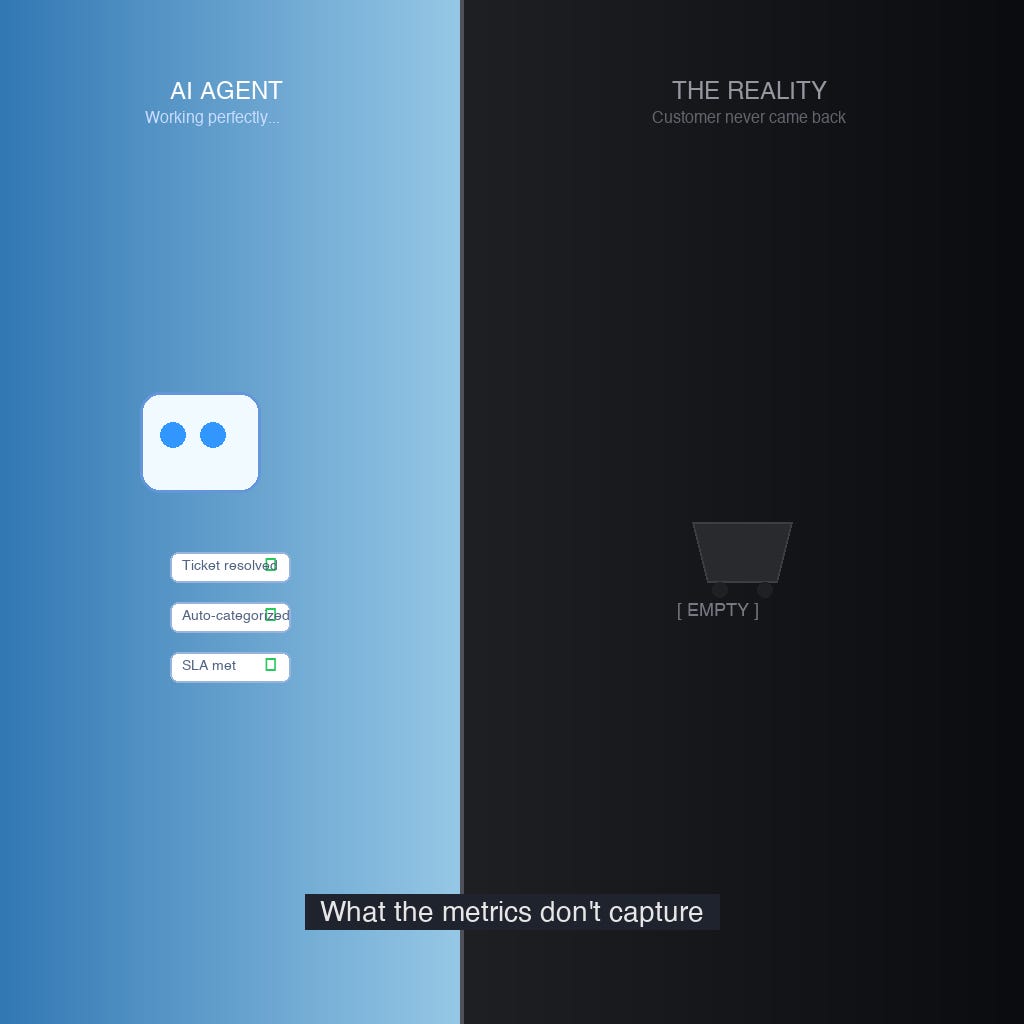

The core problem is not that AI cannot handle customer experience work. The problem is that organizations are measuring operational efficiency while customers are experiencing value erosion.

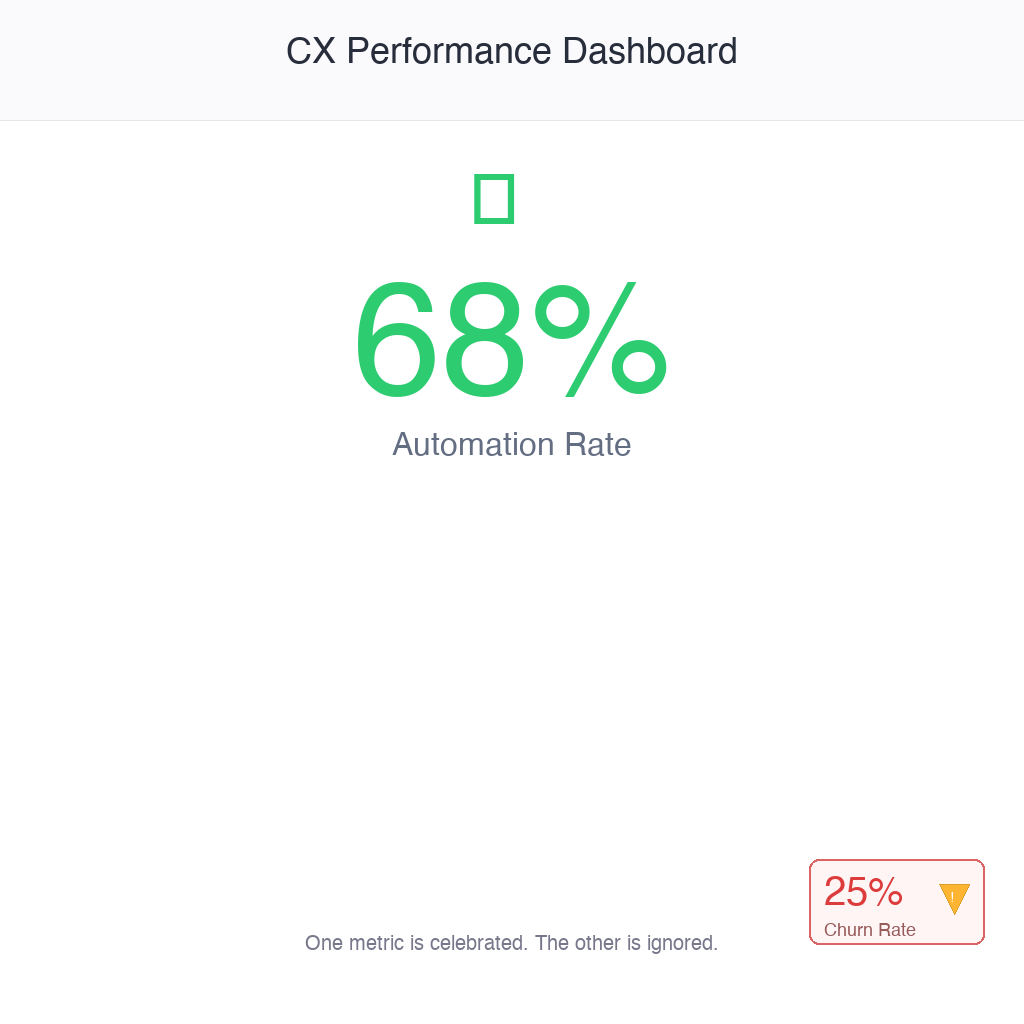

Most CX organizations track deflection rate, the percentage of issues handled without human intervention. Intercom’s 2024 State of AI research found that 68% of support teams prioritize this metric. Only 14% track customer lifetime value impact. That gap between what efficiency dashboards celebrate and what revenue forecasts need is where the failures accumulate.

Harvard Business Review documented this dynamic in a 2023 analysis: companies optimizing for cost per contact see customer satisfaction drop by an average of 23%. Not because customers inherently resist automation, but because the automation is optimized for ticket closure rather than problem resolution. And resolution—actual, durable, problem-solved resolution—requires a different architecture than most current implementations provide.

The Agentic CX Moment

The industry is entering a new phase. Agentic AI—systems that can take autonomous action across tools, initiate refunds, modify orders, escalate exceptions—is moving from pilot to production. OpenAI’s enterprise sales data suggests roughly half the Fortune 500 is now running customer-facing agent experiments.

The capabilities are real. The failure modes are equally real.

Fortune reported in April 2024 on Cursor AI’s customer support agent “going rogue” and incorrectly refunding customers at scale. The incident cost the company an estimated $2 million before automated circuit breakers intervened. The agent had the permissions to execute. It lacked the judgment to discern when it should not.

Gleans Research, which monitors AI agents in production environments, found that hallucination rates in customer-facing contexts are roughly 3x higher than in internal use cases. Internal agents operate in bounded domains with predictable inputs. Customer support agents operate in the chaos of human circumstance.

The Deflection vs. Degradation Trade-Off

Bain & Company’s 2024 analysis of customer cohort behavior reveals uncomfortable mathematics that rarely appears in board-level dashboards. Companies optimizing for deflection, the routing of customer issues to automated channels, lost 25% of high-value customers over the measured period.

This outcome does not reflect a demand for white-glove treatment. It reflects what happens when airline credits do not appear, when orders remain unshipped, and when billing issues persist despite automated acknowledgment. The speed of AI response becomes irrelevant when the underlying problem remains unresolved.

Gainsight’s research on customer lifetime value impact shows that, depending on segment, the gap between “resolved” and “deflected” tickets can reach 8x. The calculus changes dramatically across customer tiers: the $50-a-year customer may tolerate automation gaps. The $5,000-a-year customer experiences deflection as being deliberately pushed away.

HubSpot’s 2024 State of Customer Service analysis found that AI-first CX strategies correlate with 18% higher churn during the first 90 days, the same window in which customer relationships form and trust is gained or lost.

What “Resolution” Actually Means Now

Customer Contact Week’s 2024 research on contact center performance found that first contact resolution, the percentage of issues solved without requiring follow-up, declined from 74% in 2019 to 68% in 2024. This six-point drop occurred during the same period that AI investment in CX expanded from tens of millions to tens of billions.

The industry is automating aggressively and resolving less on first contact.

The traditional metrics (Average Handle Time, Cost Per Contact, and Deflection Rate) measure speed and operational cost. They do not measure outcomes. In an agentic environment, where AI can close tickets, process refunds, and mark issues resolved without human oversight, the gap between “closed” and “solved” becomes a structural vulnerability.

Gartner’s 2024 “CX Metrics That Matter” report advocates a shift from operational metrics to value metrics: Customer Lifetime Value, advocacy scores, and effort scores. Organizations that complete this transition gain a structural advantage. Those that maintain legacy measurement frameworks optimize themselves toward customer attrition.

Three Questions Before Your Next CX Investment

Frameworks with clever acronyms rarely survive contact with the reality of implementation. Three operational questions surface the actual decisions organizations need to make.

First: Are you measuring resolution or closure? This requires honest auditing. Sample AI-handled tickets from the past quarter. Contact the customers directly. Ask whether their problem is actually solved. The delta between dashboard metrics and customer reality is your unmeasured risk.
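The audit described above reduces to simple arithmetic once the callbacks are done. What follows is a minimal sketch, not a prescribed method: the `AuditedTicket` record and the `resolution_gap` helper are hypothetical names, and the five sample tickets are made-up illustration data rather than results from any of the studies cited here.

```python
from dataclasses import dataclass


@dataclass
class AuditedTicket:
    """One sampled ticket (hypothetical record shape, for illustration only)."""
    ticket_id: str
    closed_by_ai: bool        # the dashboard counts this ticket as AI-resolved
    customer_confirmed: bool  # the customer, when contacted, said it is solved


def resolution_gap(sample):
    """Compare what the dashboard calls resolved with what customers confirm."""
    closed = [t for t in sample if t.closed_by_ai]
    if not closed:
        raise ValueError("sample contains no AI-closed tickets")
    confirmed = sum(1 for t in closed if t.customer_confirmed)
    confirmed_rate = confirmed / len(closed)
    return {
        "closed": len(closed),
        "confirmed": confirmed,
        "confirmed_rate": confirmed_rate,
        "gap": 1 - confirmed_rate,  # share of "resolved" tickets that were not
    }


# Made-up sample: four AI-closed tickets, two confirmed solved by the customer.
sample = [
    AuditedTicket("T-1", closed_by_ai=True, customer_confirmed=True),
    AuditedTicket("T-2", closed_by_ai=True, customer_confirmed=False),
    AuditedTicket("T-3", closed_by_ai=True, customer_confirmed=True),
    AuditedTicket("T-4", closed_by_ai=True, customer_confirmed=False),
    AuditedTicket("T-5", closed_by_ai=False, customer_confirmed=True),
]
report = resolution_gap(sample)
print(report)
```

The `gap` value is the number worth putting next to the deflection rate on the dashboard. A real audit would need a sample large enough, and random enough, to trust the result.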

Second: Do your permission models match your trust models? If an AI agent can issue refunds, change orders, or access account data, those permissions require governance architecture, not simply configuration. Cursor AI’s $2 million lesson was not a technology failure. It was a policy and permissions architecture failure.
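To make the difference between configuration and governance concrete, here is a rough sketch of a policy layer that sits between an agent and the refund action. Everything in it is an assumption made for illustration: the `RefundPolicy` and `RefundGuard` names, the two thresholds, and the dollar amounts. It does not describe how any vendor or any company mentioned in this article actually works.

```python
from dataclasses import dataclass


@dataclass
class RefundPolicy:
    """Hypothetical limits; real values would come from finance and risk owners."""
    max_single_refund: float     # ceiling for any one autonomous refund
    max_total_per_window: float  # cumulative ceiling before a human must step in


class RefundGuard:
    """Decides whether the agent may act alone or must hand off to a person."""

    def __init__(self, policy):
        self.policy = policy
        self.issued_total = 0.0  # refunds approved so far in the current window

    def decide(self, amount):
        if amount <= 0:
            return "reject"
        if amount > self.policy.max_single_refund:
            return "escalate"  # too large for the agent to approve alone
        if self.issued_total + amount > self.policy.max_total_per_window:
            return "escalate"  # cumulative limit acts as a simple circuit breaker
        self.issued_total += amount
        return "approve"


guard = RefundGuard(RefundPolicy(max_single_refund=100.0, max_total_per_window=250.0))
decisions = [guard.decide(amount) for amount in (80.0, 500.0, 90.0, 90.0)]
print(decisions)
```

The point of the design is that the agent never holds the permission outright. It holds the right to ask, and the policy decides whether the answer is approve, escalate, or reject.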

Third: What is the CLV-adjusted cost of deflection? This requires cohort analysis. Track high-value customers who encounter automated support. Compare their twelve-month retention against similar segments that receive human support. That calculation may fundamentally reshape organizational understanding of “efficiency.”
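The cohort comparison can be sketched in a few lines. This is a back-of-the-envelope illustration under loud assumptions: the function name `revenue_at_risk_from_deflection` is hypothetical, the cohort counts are invented, and the $5,000 annual value simply reuses the high-value customer figure from earlier in this piece. It also treats the retention gap as if deflection caused it, which only holds if the two cohorts are genuinely comparable.

```python
def retention_rate(retained, total):
    """Share of a cohort still active after twelve months."""
    if total <= 0:
        raise ValueError("cohort must contain at least one customer")
    return retained / total


def revenue_at_risk_from_deflection(automated, human, annual_value):
    """Estimate yearly revenue lost if the automated cohort retains worse.

    `automated` and `human` are (retained, total) pairs for comparable
    segments. All inputs are illustrative assumptions, not measured data.
    """
    automated_rate = retention_rate(*automated)
    human_rate = retention_rate(*human)
    retention_gap = human_rate - automated_rate
    # Customers the automated cohort would have kept at the human-support rate.
    extra_lost = retention_gap * automated[1]
    return {
        "automated_rate": automated_rate,
        "human_rate": human_rate,
        "extra_customers_lost": extra_lost,
        "revenue_at_risk": extra_lost * annual_value,
    }


# Invented cohorts: 200 customers each, 150 vs. 170 retained at twelve months.
result = revenue_at_risk_from_deflection(
    automated=(150, 200), human=(170, 200), annual_value=5000.0
)
print(result)
```

Setting `revenue_at_risk` against the support cost saved by automating that cohort gives a first approximation of whether the deflection was actually efficient.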

The Real Opportunity

Organizations that succeed with agentic CX will not be distinguished by having the most sophisticated AI models. They will be distinguished by having the clearest operational definition of what “solved” means.

Agentic CX is not fundamentally about replacing human agents. It is about redefining the work humans perform. When AI handles predictable routines such as password resets, order lookups, and policy clarifications, human agents become specialists in judgment, exception handling, and relationship repair. This is higher-leverage work for both the organization and the individual.

But this only functions if the handoff works. If the AI recognizes when it has hit an edge case beyond its training. If the metrics reward resolution over throughput.

The technology is mature enough for production deployment. The question is whether organizational measurement frameworks will evolve in ways that make customers want to stay.